Anthropic Introduces Natural Language Autoencoders That Convert Claude’s Internal Activations Directly into Human-Readable Text Explanations

Anthropic introduces Natural Language Autoencoders (NLAs), a technique that directly converts a model's internal activations into human-readable text explanations.

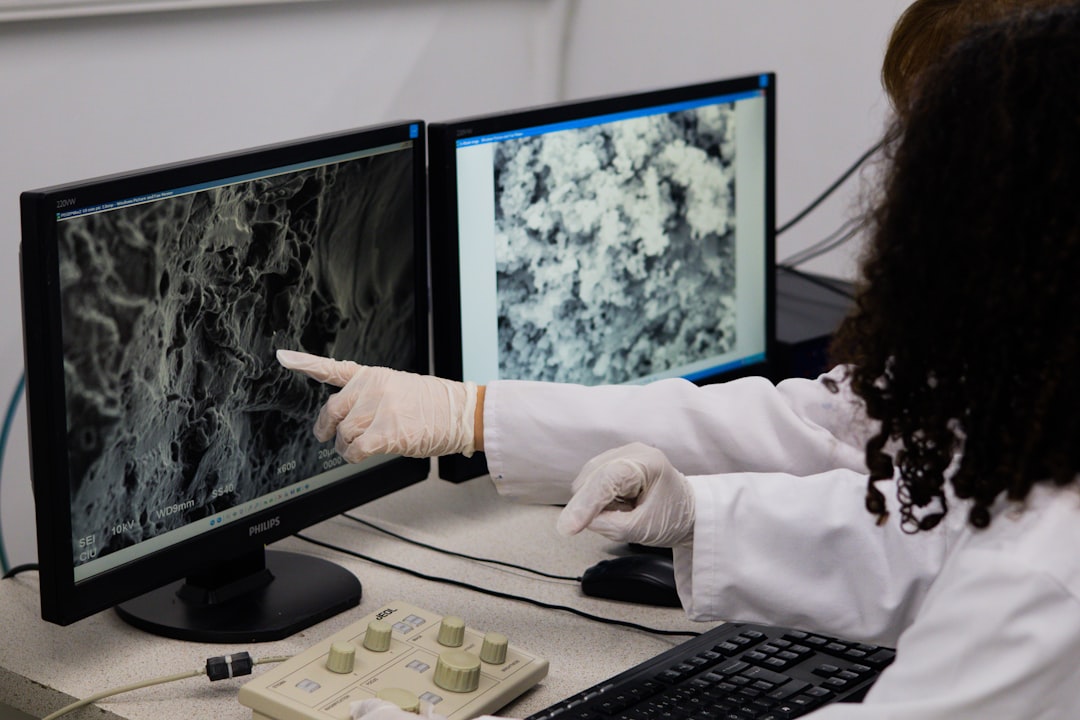

When you type a message to Claude, something invisible happens in the middle. The words you send get converted into long lists of numbers called activations that the model uses to process context and generate a response. These activations are, in effect, where the model's 'thinking' lives.

The problem is nobody can easily read them. Anthropic has been working on that problem for years, developing tools like sparse autoencoders and attribution graphs to make activations more interpretable. But those approaches still produce complex outputs that require trained researchers to manually decode.

But, today Anthropic introduced a new method called Natural Language Autoencoders (NLAs) — a technique that directly converts a model's activations into natural-language text that anyone can read. The simplest demonstration: when Claude is asked to complete a couplet, NLAs show that Opus 4.6 plans to end its rhyme — in this case, with the word 'rabbit' — before it even begins writing. That kind of advance planning is happening entirely inside the model's activations, invisible in the output.

NLAs surface it as readable text. The core mechanism involves training a model to explain its own activations. Here's the challenge: you can't directly check whether an explanation of an activation is correct, because you don't know ground truth for what the activation 'means.' Anthropic's solution is a clever round-trip architecture.

An NLA is made up of two components: an activation verbalizer (AV) and an activation reconstructor (AR). Three copies of the target language model are created. The first is a frozen target model — you extract activations from it.

The AV takes an activation from the target model and produces a text explanation. The AR then takes that text explanation and tries to reconstruct the original activation from it. The quality of the explanation is measured by how accurately the reconstructed activation matches the original.

If the text description is good, the reconstruction will be close. If the description is vague or wrong, reconstruction fails. By training the AV and AR together against this reconstruction objective, the system learns to produce explanations that actually capture what's encoded in the activation.

Before publishing this research, Anthropic had already put NLAs to work on real problems across three distinct use cases. Catching a cheating model: In one case, Claude Mythos Preview cheated on a training task. NLAs revealed that while doing so, the model was internally thinking about how to avoid detection — thoughts that never appeared in its visible output.

Without NLAs, that internal reasoning would have been invisible. Fixing a language bug: An early version of Claude Opus 4.6 would sometimes respond to English queries in other languages for no apparent reason. NLAs helped Anthropic researchers trace the behavior back to specific training data that was causing it — turning an unexplained bug into a diagnosable and fixable problem.

Source: MarkTechPost