Mozilla's AI-Powered Pipeline Uncovers 271 Hidden Firefox Vulnerabilities

Anthropic's Claude Mythos Preview AI model discovers 271 previously unknown security vulnerabilities in Firefox 150, including bugs dating back 20 years.

Category

41 articles in this category

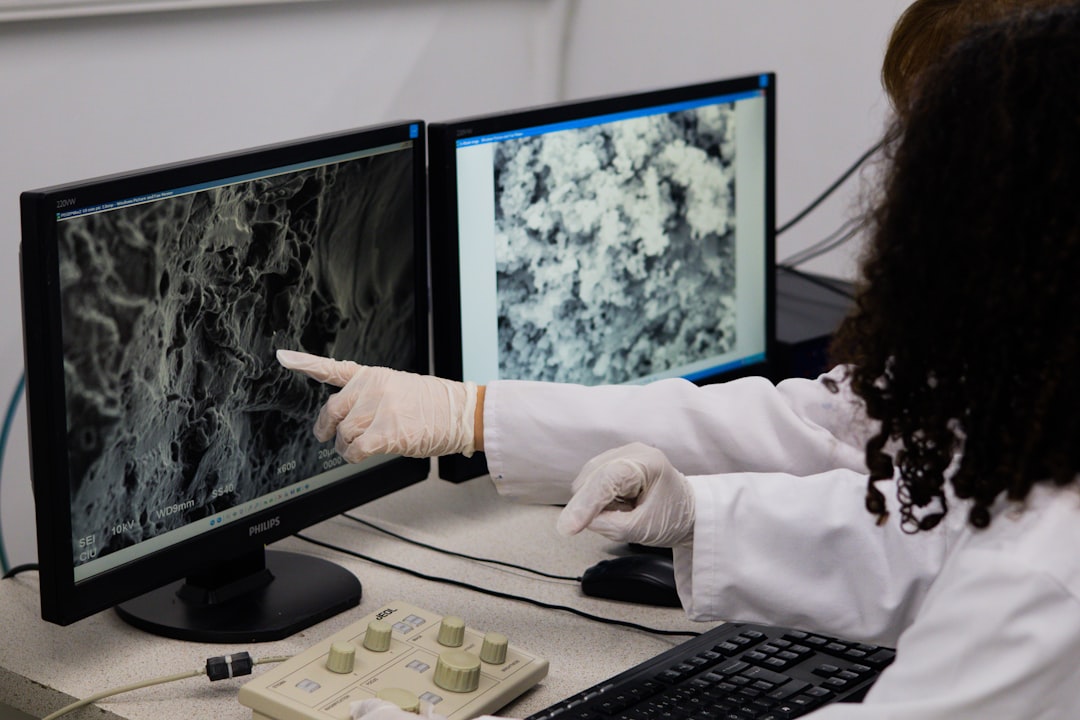

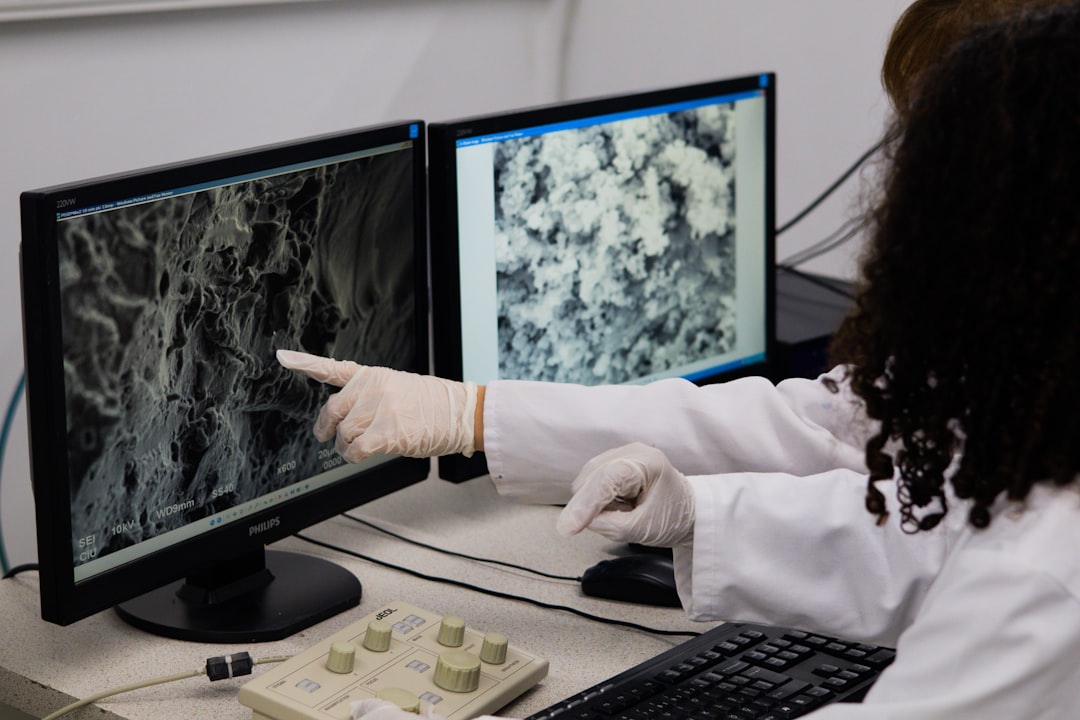

Researchers from the National Autonomous University of Mexico develop new antibiotics from scorpion venom and habanero peppers to combat tuberculosis and reduce bacterial resistance.

Anthropic introduces Natural Language Autoencoders (NLAs), a technique that directly converts a model's internal activations into human-readable text explanations.

The rise of AI technology has disrupted translation jobs in publishing, but human translators may still have a role to play.

Journalist Jamie Bartlett explores the world of AI jailbreaking, where individuals intentionally manipulate chatbots to reveal their vulnerabilities and improve safety.

Anthropic introduces 'dreaming,' a system that enables AI agents to learn from past sessions and improve over time, along with updates to its Claude Managed Agents platform.

Experts warn UK schools to remove online photos of pupils' faces due to rising threat of AI-generated sexually explicit images being used for blackmail.

Sakana AI's RL Conductor, a small language model trained via reinforcement learning, automatically orchestrates a diverse pool of worker LLMs to achieve state-of-the-art results on difficult reasoning and coding benchmarks.

Mozilla's use of Anthropic's Mythos AI model yields 271 verified Firefox vulnerabilities with minimal false positives.

The EU has agreed on simplified AI rules, easing requirements for small businesses and delaying deadlines for high-risk AI.

Meta AI has released NeuralBench, a unified, open-source framework for benchmarking AI models of brain activity across 36 EEG tasks and 94 datasets.

The gap between what AI promises and what it delivers is not subtle, and the issue often lies not with the model, but with the context in which it's deployed.

PipelineRL's vLLM inference engine upgrade from V0 to V1 required fixing backend behavior to match training dynamics.

As AI strategy task forces present executives with daring options, experts warn that a solid strategy is crucial to avoid failure and unlock $3 trillion in annual productivity gains.

The AI market is full of big promises of grand transformation.

OpenAI introduces Symphony, a system that enables AI agents to manage themselves, eliminating the need for human oversight in coding workflows.

An investigation finds Kenya's AI-driven healthcare system favours the rich, contradicting President William Ruto's promise of universal access.

Developers are formalizing prompting techniques to address specific failure modes in large language models, ensuring reliability and consistency in production systems.

Sakana AI's KAME architecture combines the speed of direct speech-to-speech models with the knowledge of large language models, achieving near-zero latency and improved conversational AI.

Tokenization drift occurs when small changes in input formatting cause unpredictable shifts in model behavior, and it can be addressed through automated prompt optimization.

Every month, a handful of fascinating scientific stories slip through the cracks – here are six that nearly went unnoticed.

A security flaw in the Model Context Protocol (MCP) affects 200,000 servers, allowing for arbitrary command execution due to insecure default settings.

Companies are taking control of their own data to tailor AI for their needs, balancing ownership with safe and trusted data flow.

A new Christian phone network launches with strict content controls, while a startup aims to make AI model development more transparent and controllable.

As generative AI makes cyberattacks cheaper and more accessible, robust defenses are crucial to prevent vulnerabilities from being exploited.

The cost of evaluating AI models has skyrocketed, making it a new bottleneck in the field, with some evaluations costing tens of thousands of dollars.

A detailed look at the data engineering, pre-training, supervised fine-tuning, and reinforcement learning behind the Granite 4.1 LLMs.

Today's tech news: nuclear waste storage becomes urgent as nuclear energy gains traction, and AI agents are being developed to work together to tackle complex tasks.

Hackers are manipulating AI chatbots into breaking their own safety rules to test their security and push the boundaries of what they can do.

A new training paradigm called Reinforcement Learning with Verifiable Rewards with Self-Distillation (RLSD) allows enterprises to build custom reasoning models at a lower cost.

More than 80% of US government agencies have already adopted AI agents, with many planning to increase their use in the coming years, according to a new IDC study.

A large-scale analysis of websites from the Internet Archive reveals the pervasive influence of AI-generated text on the web.

Zine artists and writers argue that the handmade nature of self-published booklets is incompatible with artificial intelligence.

The development of AI has reached a crucial point where companies have built the technology and promised transformation, but the path to get there remains unclear.

Many enterprises are discovering that the biggest obstacle to meaningful AI adoption is the state of their data infrastructure.

New York University's Institute for Engineering Health is revolutionizing the approach to health research by assembling teams from various disciplines to tackle specific disease states.

Anthropic's experimental marketplace, Project Deal, enables AI agents to buy and sell goods for real money, yielding surprising results on agent performance.

Cybercriminals' growing use of AI is driving a surge in sophisticated scams and phishing attacks; AI is also being used in healthcare to improve patient outcomes, though its effectiveness there remains uncertain.

The Rocket Report returns with updates on the Artemis III rocket, SpaceX's AI ambitions, and controversy surrounding Canada's spaceport plans.

I don’t need to tell you that AI is everywhere.

QIMMA validates benchmarks before evaluating models, ensuring reported scores reflect genuine Arabic language capability in LLMs.